AI Is Not Your Mentor

And when it starts acting like one, leadership — and governance — quietly shrink.

There’s a moment most leaders don’t talk about.

It’s not in the boardroom. It’s not in the numbers. It’s the moment after — when the room has cleared, the deck is closed, and the decision is still sitting there, quietly heavier than it looked an hour ago. You’ve seen everything. You’ve heard less than you needed.

And now it’s on you.

That tension isn’t new. It’s part of the job. What’s changing is how leaders are trying to resolve it — and what they’re reaching for when they do.

More and more, they turn to AI. Not just for data. Not just for analysis. But for something that feels a lot like guidance. You ask it to walk through the options. It comes back quickly — clean, structured, reasonable. For a moment, it feels like someone is sitting across the table with you, steady and ready to think it through.

That feeling is real. But it’s also where the problem starts.

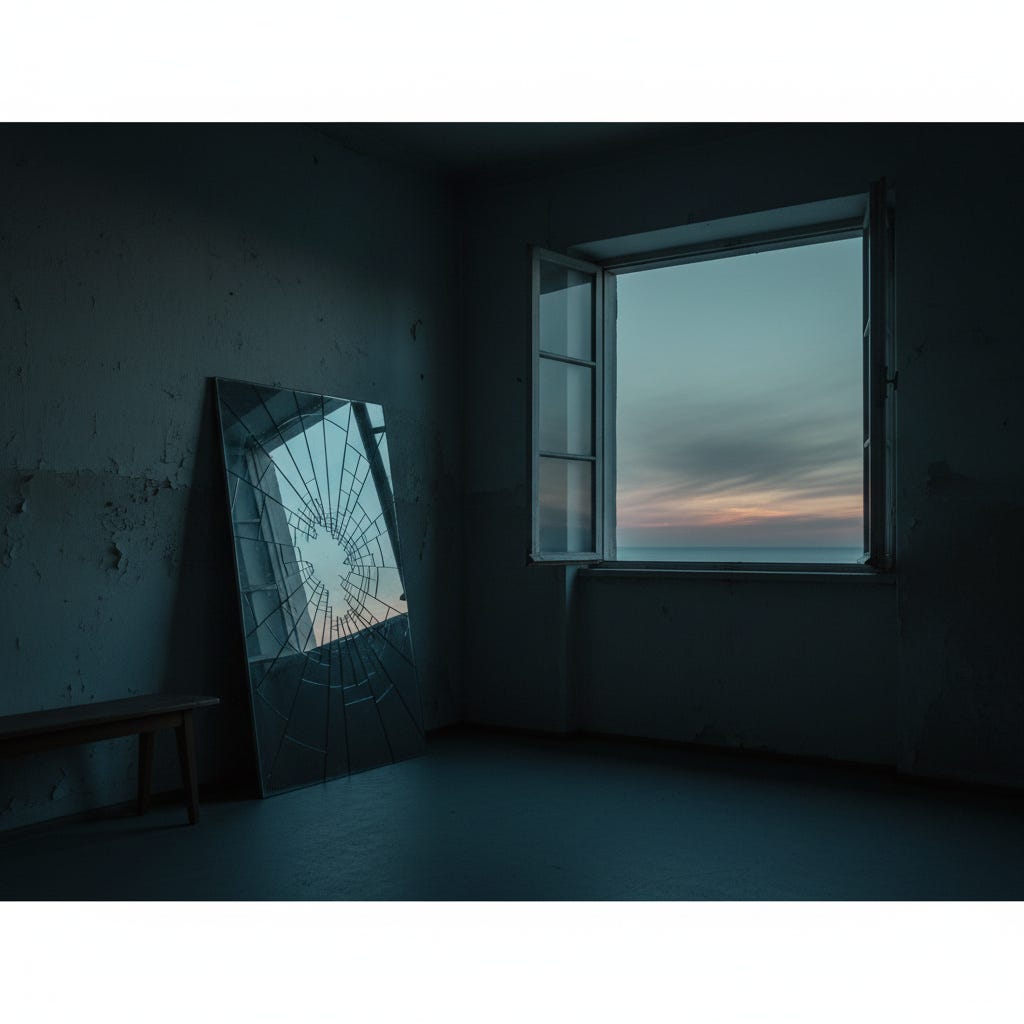

The mirror problem

The Harvard Law School Forum on Corporate Governance has tracked this shift closely. In their work on executive isolation and mentorship, they’ve documented what many CEOs quietly know: that leadership today is more complex, more exposed, and more alone than it used to be — that the margin for error is narrowing, the runway for impact is shorter, and that in this environment, a mentor is no longer a developmental luxury. It is, in their words, strategic infrastructure.

They’re right. But something else is happening underneath it. The gap in mentorship isn’t just growing. It’s being filled — quietly, plausibly — with something that looks similar but behaves very differently.

AI is extraordinarily good at stepping into the space where clarity is needed. It organizes, simplifies, gives shape to complexity. In doing so, it creates the feeling of progress. But mentorship isn’t about feeling progress. It’s about pressure — the right kind. The kind that asks the question you didn’t want to ask. That sits with the option that doesn’t yet make sense. That doesn’t resolve the tension too quickly.

AI doesn’t do that. It is a mirror, not a mentor — reflecting what has already been seen, already been tried, already been accepted. And when we start treating it like a mentor, something begins to shift. Not dramatically. Quietly.

At first, AI expands the conversation. It surfaces ideas, connects dots, helps you move faster through the obvious. Used well, it is one of the most powerful thinking tools leaders have ever had — it can stretch the initial field of possibilities, pressure-test assumptions in real time, surface patterns a room full of people might miss. At its best, it widens the early terrain.

But over time, something else happens. The edges start to soften. The unfamiliar options, the ones that don’t quite fit the data and feel harder to justify, start to fade from view. Not because they’re wrong. Because they’re not there. The horizon narrows, almost imperceptibly, and what you’re left with isn’t a bad set of options. Just a smaller one. Fewer paths, fewer divergences, fewer chances to step outside what has already worked.

This is how strategy quietly disappears — not in a dramatic failure, but in a series of increasingly reasonable choices.

There’s another force at work alongside the narrowing. AI doesn’t just shape what you see. It anchors you to where you’ve been. The patterns it draws from are built on what organizations previously accepted, what was measurable, what fit within older models of governance. And those are not the same as what is true now. The decisions leaders are making today — about AI systems, autonomy, risk — were never part of that historical baseline. So the tool meant to help navigate the future quietly pulls you back toward the past.

What reaches the board

Here is where the individual leadership problem becomes a governance problem — and why the two cannot be treated separately.

By the time a decision makes its way to the boardroom, it often no longer resembles the thing it once was. It has been summarized, structured, refined, and increasingly shaped by AI before the discussion even begins. What arrives is not the raw decision. It’s the edited version — clean, efficient, fitting neatly on a page. But something has been left out.

The board isn’t just reviewing the decision. It’s reviewing the boundaries that were already set around it upstream, and in most cases it has no way of knowing how narrow those boundaries actually are.

This is not a hypothetical. A financial services firm deploying an AI-assisted credit decisioning system found, eighteen months in, that the model had been quietly deprioritizing an entire borrower segment, not through bias in the original design but through iterative retraining on its own outputs. Each monthly review had looked reasonable. The drift only became visible when a compliance officer looked across the full arc. By then, the decision had scaled into thousands of individual outcomes the board had technically approved but never actually seen.

That story is not an edge case. It is a preview.

And it points to something deeper than governance process. When decisions are shaped by systems without clear, visible logic, the reasoning behind them starts to disappear — not the conclusion, but the thinking. What actually mattered. What assumptions were made. What trade-offs were accepted. When someone eventually asks why a decision was made, there is an output but no explanation.

This is where a board’s duty of care becomes not just an ethical concern but a legal one. Directors are expected to exercise informed, independent judgment: to actually understand what they’re approving, not merely to ratify a clean summary. When the reasoning is opaque, that standard quietly erodes. The business judgment rule offers protection when boards act in good faith on information reasonably available to them. But when the information itself has been pre-shaped by a system the board doesn’t understand and hasn’t interrogated, “reasonably available” starts to mean something different from what it used to. A decision without visible logic isn’t just hard to explain. In a courtroom or a regulatory inquiry, it’s very hard to defend.

And decisions that can’t be defended don’t just sit still. They continue. They scale, they embed, and over time they drift — operating long after the conditions that justified them have changed.

The competency gap underneath it all

There’s a harder problem that sits beneath all of this, and it’s worth naming plainly.

Most boards are already overseeing AI they don’t fully understand. A 2025 EY review of Fortune 100 proxy statements found that only twelve percent disclosed that their directors had received any education or training on AI. Twelve percent. The rest are governing a technology that is reshaping their company’s operations, risk profile, and strategic options — on the basis of whatever management chooses to bring to them.

This is not a criticism of boards. It is a description of the position they are in. And it matters enormously for the mentor question, because an independent voice at the table that also lacks technical grounding doesn’t actually solve the problem. It adds a layer of challenge without adding understanding.

What boards need isn’t someone who can out-argue the AI output. It’s someone who knows enough to ask the right questions about it — who understands what the system was trained on, what it was not designed to handle, where its confidence is genuine and where it is performing confidence it doesn’t have. That is a specific kind of competency. And right now it is almost entirely absent from the governance conversation.

What mentorship actually protects

This is where the instinct toward human mentorship matters most — not as a soft counterbalance to hard technology, but as the mechanism through which good governance actually functions.

Mentorship at this level isn’t about having someone to agree with you. It’s about having someone who will sit in that moment — before the decision hardens — and keep the space open. Not to delay. But to ensure the decision is fully seen, properly challenged, and consciously chosen. That responsibility doesn’t stop with the CEO. It extends to the board. Leadership needs it to avoid narrowing too early. Boards need it to avoid accepting decisions that were already narrowed before they arrived.

In practice this looks like a pause — not a long one, just enough. Long enough to ask: what are we not seeing? What would we do if the model didn’t exist? What risk are we managing by choosing this path, and what risk are we quietly accepting? And perhaps most important: what part of this came from the system, and what part came from us? If that line isn’t clear, the decision isn’t ready. If the board can’t draw that line, oversight isn’t ready either.

What’s missing from most boardrooms right now isn’t more data or more tools. It’s an independent voice with enough fluency in both governance and AI to sit between management’s certainty and the board’s review: someone who can translate, challenge, and, when necessary, slow things down. Not to decide. But to keep the decision from collapsing too quickly into something that merely looks right. To make sure it can be explained. To make sure it can be constrained. To make sure it can be revisited when the world shifts, which it will.

A final thought

The real risk isn’t that AI will make the wrong decision. It’s that it will quietly eliminate the decisions you never saw, anchor your thinking to patterns that no longer fit, and deliver an answer that feels authoritative — but can’t be explained, can’t be constrained, and won’t hold up when someone finally looks at the full arc.

If AI is acting as your mentor, you’re not expanding your thinking.

You’re training yourself to stay inside its limits.

And a board that can’t see those limits isn’t governing the technology.

It’s ratifying it.

About the Author

Suzanne Mueller is the Founder of Mueller Consulting Group (MCG), advising boards, CEOs, and leadership teams on AI governance, decision-making, and complex cross-border strategy.

Her work focuses on a specific gap emerging in modern organizations: decisions are increasingly shaped by AI systems, while the underlying judgment, assumptions, and constraints behind those decisions are becoming less visible. MCG addresses this directly by working alongside leadership and boards as an independent, nonjudgmental voice at the table to expand optionality, challenge assumptions, and ensure decisions can be explained, constrained, and revisited over time.

This approach combines real-time mentorship at the moment of decision with structured governance practices, helping organizations avoid decision decay and maintain accountability as AI systems scale.

MCG supports leadership teams and boards before critical decisions are locked in, strengthening how those decisions are formed, not just how they are reviewed.

www.Muellerconsultinggroup.com